Second Uber Science Symposium: Showcasing Developments in Programming Systems and Tools

May 31, 2019 / Global

On May 3, 2019, Uber’s Programming Systems Team hosted the Programming Systems and Tools Track of the company’s Second Uber Science Symposium at our San Francisco office. The program featured a full day of talks by leading researchers and practitioners in the programming systems and tools area, from institutions such as MIT and Berkeley as well as companies such as Google and Twitter.

Presentation topics included advances in automatic testing, automatic bug fixing, compilers, microservices, and machine learning. We were privileged to host seven excellent speakers, and the event was attended by over 100 members of academia and industry, more than 40 of them in person.

This Uber Science Symposium was the second in a series designed to bring together researchers and practitioners from different fields across academia and industry. In addition to Programming Systems and Tools, the Second Uber Science Symposium included two parallel tracks with full-day programs: Behavioral Science and Bayesian Optimization. The First Uber Science Symposium, held on November 28, 2018, focused on Deep Learning, Reinforcement Learning, and Natural Language Processing/Conversational AI.

Talks in the Programming Systems and Tools Track ranged from the eminently practical to the conceptual to the disruptive. Among other things, attendees learned how Twitter uses the Java Virtual Machine at scale, gained insight into how human reasoning can be emulated for software verification, and looked at a potential future where machine learning could fundamentally change software development.

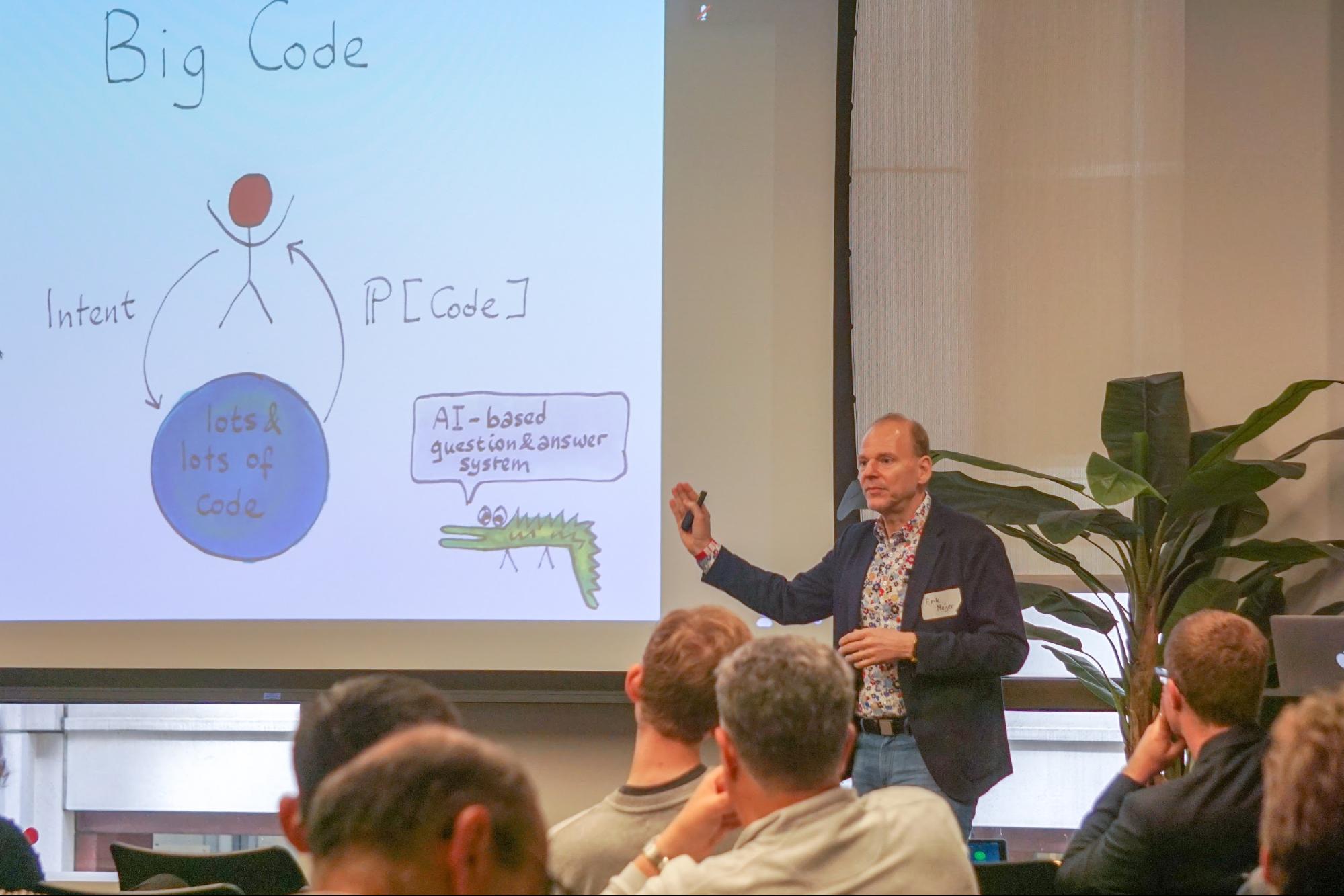

Keynote: Software is Eating the World, but ML is Going to Eat Software

In his keynote for the track, Erik Meijer, Director of Engineering at Facebook, discussed how machine learning has the potential to fundamentally change how software is constructed, and walked attendees through opportunities for leveraging machine learning to improve more conventional developer tools, compilers, IDEs, and continuous integration systems. Erik’s presentation detailed the work Facebook’s Developer Infrastructure team has done to improve developer efficiency and resource utilization at Facebook, from updating the Hack programming language to support probabilistic programming techniques to developing a new suite of AI-driven developer tools. He described their lessons learned along the way, as well as future opportunities his team foresees to optimize or auto-tune other common pieces of developer infrastructure with machine learning.

Bird-friendly Coffee: How Twitter Works to Make the JVM Work for Twitter

Ian Brown, a senior engineering manager at Twitter, discussed Twitter’s 2012 migration from a monolithic Ruby on Rails app to a fine-grained microservices mesh running on top of the JVM. In his talk, Ian explored some of the challenges and opportunities in running hundreds of services on many thousands of JVMs, and surveyed the current work and future directions of Twitter’s JVM R&D efforts.

Does Anyone Really Know What Time It Is: Google’s GoodTime Project

John Pampuch, a senior manager at Google, presented work from Google’s Java team on identifying potential errors in Java API use patterns. In particular, John discussed how Java’s various time APIs have historically been prone to misunderstanding and misuse, and outlined opportunities for improving them. According to John, the newest APIs (java.time in Java 8) are a major upgrade, but the nature and complexity of time and time APIs still plague many developers.

To remedy these problems, Google’s open source tool, Error Prone, can be used to specify and enforce checks, guiding application developers to use clearer APIs correctly and encouraging library developers to expose APIs that more clearly represent instants and durations. John also talked about evaluating a new paradigm for library/API delivery and a plan to apply this paradigm to future Java library development.

Learning to Reason about Programs

Mayur Naik, Associate Professor of Computer and Information Science at the University of Pennsylvania, presented his experience in incorporating artificial intelligence techniques such as Bayesian networks, continuous optimization, neural graph embeddings, and reinforcement learning in computer-aided approaches to analyzing complex programs. The goal of his work is to improve program verification and bug discovery by emulating the human ability to learn from past experiences, discover patterns, and avoid repeating mistakes. The resulting approaches, Mayur suggested, stand to make a quantum leap in reducing human effort and to vastly improve programmer productivity and software quality.

A Single Idea in Compiler Goes a Long Way in ML: Generalized Redundancy Removal for Machine Learning

Xipeng Shen, Professor of Computer Science at NC State University, talked about generalizing a single compiler idea, redundancy removal, and how it led to a whole set of novel techniques. According to Xipeng, these techniques help halve DNN training and inference times, enable the power-efficient concurrent training of thousands of DNN models, and make it 173 times faster to find a well-pruned DNN, with neither quality loss nor additional computing resources. In his presentation, Xipeng also described Egeria, a framework for automatic synthesis of HPC advising tools through multi-layered natural language processing.

Automatically Patching Defects in Software Systems

Martin Rinard, Professor of Electrical Engineering and Computer Science at MIT, presented two techniques for automatically patching software defects. Both techniques leverage the enormous amount of software and software revision histories produced by open source software development efforts. The first technique locates and transfers correct code from a donor application into a recipient application to eliminate defects in the recipient. The second technique generates and searches a space of potential patches, using a model of correct code learned from previous successful patches to guide the search. Martin also discussed his experimental results, highlighting the potential of these two techniques to automate the elimination of many defects.
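To make the second technique more concrete, here is a minimal sketch of a generate-and-search patch loop. It is not Martin's system: the candidate space (swapping one comparison operator), the test cases, and the prior scores standing in for a learned model of correct code are all hypothetical and chosen only for illustration.

```python
import operator

# Hypothetical patch space: each candidate replaces the comparison operator
# in a buggy check `value < limit`. The scores are made-up placeholders for
# what a model learned from past successful patches might assign.
CANDIDATES = {
    "<": (operator.lt, 0.35),   # the original (buggy) operator
    "<=": (operator.le, 0.30),
    ">=": (operator.ge, 0.15),
    "!=": (operator.ne, 0.10),
    ">": (operator.gt, 0.06),
    "==": (operator.eq, 0.04),
}

# Hypothetical test suite for is_within_limit(value, limit):
# ((value, limit), expected_result)
TESTS = [((3, 5), True), ((5, 5), True), ((6, 5), False)]


def search_patches(candidates, tests):
    """Try candidates in descending score order; return the first that passes all tests."""
    ranked = sorted(candidates.items(), key=lambda item: item[1][1], reverse=True)
    tried = []
    for name, (op, _score) in ranked:
        tried.append(name)
        if all(op(*args) == expected for args, expected in tests):
            return name, tried
    return None, tried


if __name__ == "__main__":
    patch, tried = search_patches(CANDIDATES, TESTS)
    print("accepted patch:", patch, "after trying", tried)
```

The ranking matters because real patch spaces are far too large to validate exhaustively; a better model puts a correct patch earlier in the search order. Passing the tests only shows the patch is plausible, not that it is correct.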

Automated Test Generation: A Journey from Symbolic Execution to Smart Fuzzing and Beyond

Koushik Sen, Professor of Computer Science at the University of California, Berkeley, presented an overview of his past and recent work on automated test generation using symbolic execution and fuzzing. He described how concolic testing and various flavors of fuzzing, including mutational and feedback-directed fuzzing, have found numerous critical faults, security vulnerabilities, and performance bottlenecks in mature and well-tested software systems. He also discussed a new technique called constraint-directed fuzzing, which, given a pre-condition on a program as a logical formula, can efficiently generate millions of test inputs satisfying the pre-condition.
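As a rough illustration of mutational fuzzing guided by coverage feedback, the sketch below keeps any mutated input that reaches a branch not seen before and uses it as a starting point for later mutations. It is a toy under stated assumptions, not one of the tools from the talk: `target`, its branch labels, and the mutation operators are hypothetical.

```python
import random


def target(data: bytes):
    """Hypothetical program under test: reports the branches it ran and whether it 'crashed'."""
    covered = {"entry"}
    crashed = False
    if len(data) > 0 and data[0] == ord("F"):
        covered.add("b1")
        if len(data) > 1 and data[1] == ord("U"):
            covered.add("b2")
            if len(data) > 2 and data[2] == ord("Z"):
                covered.add("b3")
                crashed = True  # stands in for a real fault
    return frozenset(covered), crashed


def mutate(data: bytes, rng: random.Random) -> bytes:
    """Apply one random byte-level mutation: insert, overwrite, or delete."""
    buf = bytearray(data)
    choice = rng.randrange(3)
    if choice == 0 or not buf:
        buf.insert(rng.randrange(len(buf) + 1), rng.randrange(256))
    elif choice == 1:
        buf[rng.randrange(len(buf))] = rng.randrange(256)
    else:
        del buf[rng.randrange(len(buf))]
    return bytes(buf)


def fuzz(seed_input: bytes, iterations: int, seed: int = 0):
    """Mutational fuzzing loop that keeps inputs which increase branch coverage."""
    rng = random.Random(seed)
    corpus = [seed_input]
    seen = set(target(seed_input)[0])
    crashes = []
    for _ in range(iterations):
        candidate = mutate(rng.choice(corpus), rng)
        coverage, crashed = target(candidate)
        if crashed:
            crashes.append(candidate)
        if not coverage <= seen:  # feedback: new branch reached
            seen |= coverage
            corpus.append(candidate)
    return corpus, seen, crashes


if __name__ == "__main__":
    corpus, seen, crashes = fuzz(b"", 200_000)
    print(len(corpus), sorted(seen), len(crashes))
```

Without the feedback step, a purely random fuzzer would need to guess all three magic bytes at once; keeping partially successful inputs lets the search reach the nested branch one byte at a time.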

If developing the next generation of Uber’s programming systems and tools interests you, consider applying for a role on our team!

Acknowledgements

We want to thank all our speakers and attendees for joining us, as well as our Uber Science Symposium general chairs and the entire Programming Systems Team for helping to make this a great event!

Adam Welc

Adam Welc is a Senior Engineer on Uber's Programming Systems Team, where he currently works on application analysis and performance tuning as well as on developing tools to improve developers' experience. More generally, his professional interests are in the area of programming language design, implementation, and tooling, with a specific focus on run-time system and compiler optimizations. Adam has over ten years of experience working with different types of virtual machines (ART, HotSpot JVM, AVM, ORP JVM, Jikes RVM, J9 JVM), compilers (GreenMarl, ASC, StarJIT), and other large and complicated frameworks and systems (ProGuard, D8, ReDex, Truffle framework, STM runtime for Intel's C/C++ compiler). He holds a PhD in Computer Science from Purdue University.

Posted by Adam Welc
